
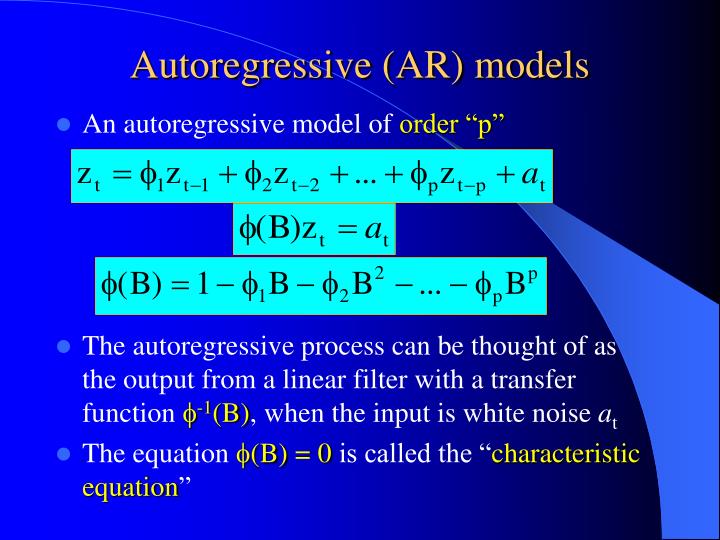

In this paper, we study the Lasso estimator for fitting autoregressive time series models. The Lasso is a popular model selection and estimation procedure for linear models that enjoys nice theoretical properties. We adopt a double asymptotic framework in which the maximal lag may increase with the sample size, and we derive theoretical results establishing various types of consistency.

A related approach identifies an optimal subset ARMA model by fitting an adaptive Lasso regression of the time series on its own lags and on the lags of the residuals from a long autoregression fitted to the time series data.

For the adaptive Lasso (adaLASSO) in high-dimensional time-series models, we assume that both the number of covariates in the model and the number of candidate variables can increase with the sample size (polynomially or geometrically). In other words, we allow the number of candidate variables to be larger than the number of observations. We show that the adaLASSO consistently chooses the relevant variables as the number of observations increases (model selection consistency) and has the oracle property, even when the errors are non-Gaussian and conditionally heteroskedastic. This allows the adaLASSO to be applied to a myriad of applications in empirical finance and macroeconomics. A simulation study shows that the method performs well in very general settings with t-distributed and heteroskedastic errors, as well as with highly correlated regressors. Finally, we consider an application to forecasting monthly US inflation with many predictors, where the model estimated by the adaLASSO delivers better forecasts than traditional benchmark competitors such as autoregressive and factor models.

However, current Lasso-type estimators for autoregressive time series models still focus on models with homoscedastic residuals. To address this, an iteratively reweighted adaptive Lasso algorithm is presented for estimating time series models under conditional heteroscedasticity in a high-dimensional setting.

The adaLASSO is a one-step implementation of the family of folded concave penalized least-squares estimators, and it selects a reduced set of the known covariates for use in a model. … penalty for both fitting and penalization of the coefficients. We also introduce a new estimation procedure that adapts weighted M-estimation to environmental time series data, while selecting the optimal value for …
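
To make the "one-step folded concave" description concrete, here is a sketch of the objective under our own notational assumptions: squared-error loss on regressors $x_t$, an initial estimate $\tilde\beta$, and a single local linear approximation (LLA) step of a concave penalty $p_\lambda$ around $\tilde\beta$. The specific penalty $p_\lambda(u) = \lambda u^{1-\gamma}/(1-\gamma)$ with $0<\gamma<1$ is one illustrative choice that reproduces the adaLASSO weights, not necessarily the one used in the source.

```latex
% adaLASSO objective with data-driven weights
\hat{\beta}^{\mathrm{ada}} = \arg\min_{\beta}\;
  \frac{1}{2n}\sum_{t=1}^{n}\bigl(y_t - x_t^{\top}\beta\bigr)^2
  + \lambda \sum_{j=1}^{p} w_j\,\lvert\beta_j\rvert,
\qquad w_j = \lvert\tilde{\beta}_j\rvert^{-\gamma},\quad \gamma > 0.

% One LLA step of a folded concave penalty about \tilde\beta
\sum_{j=1}^{p} p_\lambda(\lvert\beta_j\rvert) \approx
  \sum_{j=1}^{p}\Bigl[p_\lambda(\lvert\tilde{\beta}_j\rvert)
  + p_\lambda'(\lvert\tilde{\beta}_j\rvert)\bigl(\lvert\beta_j\rvert - \lvert\tilde{\beta}_j\rvert\bigr)\Bigr]

% With p_\lambda(u) = \lambda u^{1-\gamma}/(1-\gamma), 0 < \gamma < 1:
p_\lambda'(u) = \lambda u^{-\gamma}
\;\Longrightarrow\;
p_\lambda'(\lvert\tilde{\beta}_j\rvert) = \lambda\,\lvert\tilde{\beta}_j\rvert^{-\gamma} = \lambda w_j .
```

Dropping the terms that do not depend on $\beta$, minimizing the squared loss plus the linearized penalty is exactly the weighted $\ell_1$ problem above, which is why the adaLASSO can be read as a single step of a folded concave penalized fit.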

In summary, we study the asymptotic properties of the Adaptive Lasso (adaLASSO) in sparse, high-dimensional, linear time-series models.
